The light is the first thing you notice. Not the sunlight streaming through the kitchen window, but the blue, synthetic glow reflecting off the face of a thirteen-year-old who hasn’t blinked in nearly a minute. Her thumb moves in a rhythmic, Pavlovian twitch. Swipe. Pause. Swipe. It is a motion so repetitive it looks mechanical, yet the chemical storm happening inside her brain is anything but.

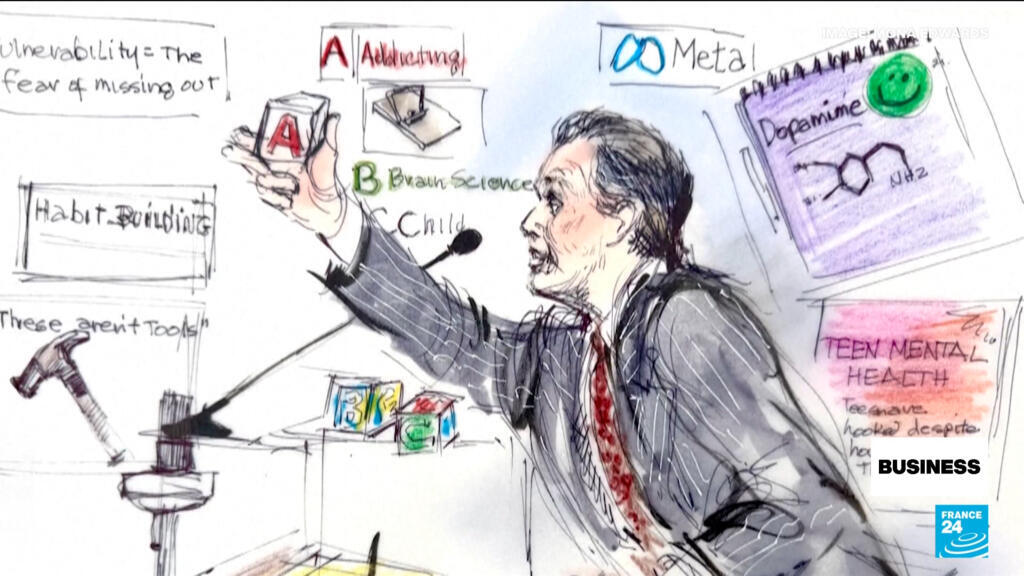

In a quiet courtroom in California, a series of legal filings is attempting to peel back the polished glass of our smartphones to reveal the gears turning underneath. The accusation isn't just that social media is "distracting" or "a bit much." The lawsuits, brought by hundreds of school districts and thousands of families, allege something far more clinical. They argue that four titans of the tech industry, Meta, ByteDance, Alphabet, and Snap, deliberately engineered their platforms to bypass the human will.

They are accused of building digital slot machines for a generation that hasn't yet developed the cognitive brakes to stop playing.

The Architecture of a Craving

To understand why this is a legal battle and not just a parenting struggle, we have to look at the blueprints. Software engineers don't just write code; they write behavior. Consider the "Infinite Scroll." Before 2006, the internet had pages. You reached the bottom, you decided whether to click "Next," and that split second of friction gave your brain a chance to ask: Do I actually want to keep doing this?

By removing the "Next" button, designers removed the pause. Now, the feed is a bottomless glass of water. You keep drinking because you never see the bottom.

Then there are the "Variable Rewards." This is the psychological engine of the casino. If a slot machine paid out five dollars every single time you pulled the lever, you would eventually get bored and walk away. But if it pays out nothing, then nothing, then a hundred dollars, then nothing—your brain enters a state of high-alert craving. You don't know when the "win" is coming, so you cannot stop looking for it.

On a social media app, that win is a Like, a flame emoji, or a viral comment. For a teenager whose prefrontal cortex, the region central to impulse control, won't be fully "wired" until their mid-twenties, this isn't a fair fight. It’s like putting a toddler behind the wheel of a Ferrari and then blaming them for speeding.

The Ghost in the Classroom

Talk to any middle school teacher and they will tell you about the "phantom vibration." Students sit at their desks, their legs bouncing, their hands instinctively reaching for pockets that are supposed to be empty. Even when the phone is turned off, the brain is still processing the potential of what is happening inside the screen.

The lawsuits allege that this isn't an accidental side effect of a popular product. They point to internal documents suggesting that companies knew their algorithms were pushing "rabbit hole" content to vulnerable minors: content that often glorified disordered eating and self-harm or fed extreme body dysmorphia.

Imagine a hypothetical boy named Leo. Leo is fourteen and feeling lonely. He watches one video about "how to be more alpha" or "why I’m sad." The algorithm, which is optimized for "Engagement Time" above all else, doesn't care if the content makes Leo healthier. It only cares if the content keeps Leo's eyes on the screen. It feeds him ten more videos, each more extreme than the last, because outrage and sadness are stickier than contentment.

Within a week, Leo’s entire worldview has been curated by a mathematical formula designed by people in Menlo Park who have never met him. The stakes are no longer just about "screen time." They are about the fundamental construction of a child’s reality.

The Defense of the Algorithm

The tech giants have a scripted response. They argue that they provide tools for connection, that they have implemented "parental controls," and that they are protected by Section 230 of the Communications Decency Act, a federal law that generally shields online platforms from liability for what their users post.

But the plaintiffs are getting clever. They aren't suing over the content of the posts. They are suing over the design of the product. They are arguing that the "Streaks" on Snapchat or "Autoplay" on YouTube are defective product features, no different from a car with brakes that fail or a toy painted with lead.

"We provide a service," the corporate defense implies. "The parents provide the supervision."

This argument feels increasingly thin to the families who have watched their children withdraw into a digital shell. It’s hard to supervise a ghost. It’s hard to compete with a thousand of the world’s smartest engineers working around the clock to capture your daughter's attention.

The Cost of a "Like"

Statistics are often used to dull the senses, but here, they are screams in the dark. Since the widespread adoption of the smartphone around 2012, rates of depression, anxiety, and emergency room visits for self-harm among adolescent girls have surged.

Correlation alone does not prove causation, but when a cliff-edge drop in adolescent well-being lines up so closely with the spread of the "Like" button and the front-facing camera, the burden of proof begins to shift.

The legal battle is essentially asking a single, uncomfortable question: Is a billion-dollar bottom line worth a generation’s peace of mind?

The companies argue that they are simply "fostering community." But look at a group of teenagers sitting together at a mall. They are in the same physical space, yet they are miles apart, each locked in their own private, algorithmic silo. The "community" is a simulation. The "connection" is a series of data points sold to the highest bidder.

The Silent Shift in the Living Room

This isn't just happening in courtrooms. It’s happening in the quiet moments of the evening when a father realizes his son hasn't looked him in the eye for three days. It’s happening when a mother finds her daughter crying over a filtered photo of a stranger three thousand miles away.

We are currently living through the largest social experiment in human history, conducted without a control group and without informed consent. We gave children a device that can access the sum of all human knowledge, but we forgot that it also gives the sum of all human toxicity access to them.

The court cases will drag on for years. There will be appeals, motions to dismiss, and endless debates over the nuances of software liability. But the cultural verdict is already being written in the bedrooms and classrooms of the world.

We are beginning to realize that "free" apps are the most expensive things we have ever owned.

The blue light eventually turns off. The teenager finally falls into a fitful sleep, her phone charging inches from her pillow. In the silence, the device sits there, waiting. It doesn't need to sleep. It doesn't have a conscience. It just has an objective: to wait for the first twitch of a thumb in the morning, ready to start the cycle all over again.

The real trial isn't happening in California. It’s happening every time we decide whether to pick up the phone, or finally, mercifully, put it down.