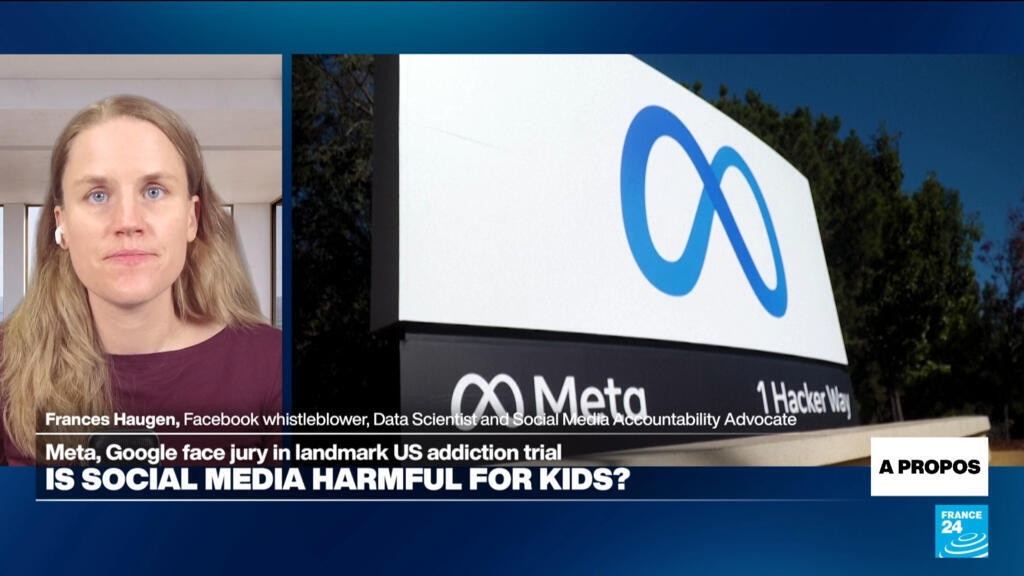

The internal documents were never supposed to leave the server farms of Menlo Park and Mountain View. For years, the architects of the modern social internet maintained a polished veneer of "connecting the world" and "building community." But inside a Los Angeles courtroom this February, that veneer has been stripped away, replaced by a devastating paper trail that suggests a different corporate mission: the deliberate, neurochemical subjugation of a generation.

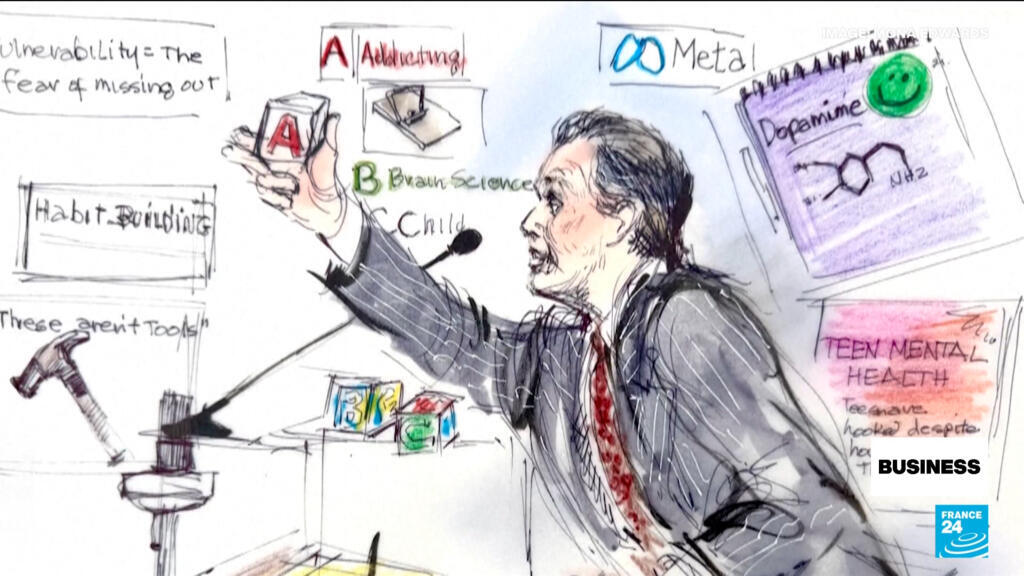

The landmark trial, K.G.M. v. Meta, has moved past the stage of abstract grievances. It is a forensic autopsy of product design. As jury selection concluded and opening statements tore through the quiet of the Los Angeles Superior Court, the case against Meta and Alphabet (YouTube) morphed from a simple negligence claim into an indictment of what plaintiffs call "the trap."

The core of the legal argument is deceptively simple. Plaintiffs argue that social media platforms are not neutral town squares. Instead, they are high-frequency psychological machines designed to exploit the "reward deficiency" of the developing adolescent brain. Within the first hour of testimony, the courtroom heard how features like infinite scroll, autoplay, and ephemeral "streaks" were not accidental innovations. They were calculated responses to internal research showing that a teenager’s need for social validation is effectively a biological toggle switch.

The Dopamine Arms Race

To understand the trial, one must understand the biology of the "Like" button. Human brains are wired to seek rewards. In adults, the prefrontal cortex—the brain’s braking system—is usually strong enough to moderate these impulses. In children, the accelerator (the ventral striatum) is fully functional, while the brakes are still being installed.

During the trial’s opening week, internal Meta studies—part of the "Project Myst" disclosure—revealed that the company knew exactly how vulnerable this made its youngest users. One document compared the "intermittent reinforcement" of notifications to the mechanics of a slot machine. A user pulls the lever (swipes down to refresh) and sometimes gets a prize (a like or a comment). The unpredictability is what creates the compulsion. If you won every time, you would get bored. If you never won, you would quit. By winning sometimes, the brain remains in a state of permanent, restless anticipation.

This is not just "heavy use." This is neurobiological hijacking.

The defense has countered by leaning on the lack of a formal "social media addiction" diagnosis in the DSM-5. Their lawyers argue that "addiction" is a loaded term being used to pathologize normal teenage behavior. They claim that the burden of moderation lies with the parents, not the platform. It is a classic strategy, reminiscent of the tobacco trials of the 1990s: emphasize individual choice while ignoring the fact that the product was engineered to erode the capacity for that very choice.

The Cost of Engagement

The human cost of this engineering is represented by the lead plaintiff, known as K.G.M. Now 20, she began using YouTube at age six and Instagram at nine. By the time she was in middle school, her digital footprint was a frantic map of a child seeking an anchor in a sea of algorithmic feedback. Her legal team presented 10,000 pages of medical records documenting a descent into depression, anxiety, and body dysmorphia, all allegedly fueled by a feed that recognized her insecurities and fed them back to her to keep her on the app.

This is where the "engagement" metric becomes sinister. For a social media company, a "successful" user is one who stays on the platform. If a 13-year-old girl is feeling insecure about her body, an algorithm designed for maximum retention will discover that she spends more time looking at "thinspiration" content than anything else. To the algorithm, this is not a crisis; it is a signal. It will serve more of that content to ensure she doesn't close the app.

Internal emails shown to the jury suggest that Meta employees flagged these "vulnerability loops" years ago. One employee noted that Instagram was "basically a pusher" for social validation. Yet, the public-facing response was always to introduce "tools for parents" that the company’s own internal data suggested were largely ineffective.

The Section 230 Shield Is Cracking

For decades, Big Tech has hidden behind Section 230 of the Communications Decency Act. This law generally protects platforms from being sued for the content users post. If someone posts a libelous comment on Facebook, you sue the poster, not Facebook.

However, the Los Angeles trial and a parallel federal multidistrict litigation (MDL) in Oakland are testing a brilliant legal pivot. The plaintiffs aren't suing over the content. They are suing over the product design.

The argument is that the algorithm itself is a product. The infinite scroll is a product. The notification timing is a product. If a manufacturer builds a car with a defective brake system, it can't blame the driver for where the driver chose to go. Similarly, the plaintiffs argue that the platforms are "defectively designed" to be addictive.

Judge Carolyn B. Kuhl’s recent ruling allowing these "failure to warn" and "negligent design" claims to proceed is a massive blow to the industry's immunity. It suggests that while the companies might not be responsible for what is said on their platforms, they are absolutely responsible for the mechanism they use to deliver it.

The Settlement Ripple Effect

While Meta and Alphabet are digging in for a long fight, others have already blinked. Snap Inc. and TikTok settled with the K.G.M. plaintiffs just days before the trial began. The terms remain confidential, but the message is clear: the risk of a public jury verdict is becoming too high to ignore.

In the UK and EU, regulators are not waiting for the courts. Ofcom has already begun finalizing child safety measures under the Online Safety Act, which will force platforms to filter "harmful" content from children’s feeds by July 2026. The era of the "unfiltered" child internet is effectively over.

But for the thousands of families represented in the US lawsuits, these regulatory wins are too late. They are looking for more than just a change in the code; they are looking for an admission of what was done.

A Generation as a Lab Rat

The defense continues to argue that social media provides "connection and community." They point to marginalized youth who find support online. They aren't entirely wrong. But the trial isn't about the existence of the platforms; it’s about the predatory nature of their optimization.

There is a fundamental difference between a library and a casino. A library is a resource you use and then leave. A casino is an environment designed to make you lose track of time, space, and your own better judgment. By treating the adolescent brain as a variable to be optimized for ad revenue, Silicon Valley didn't just build a better social network. It conducted a live, global psychological experiment on an entire generation without that generation's consent.

As the trial moves into its second month, the focus will shift to "causality." The tech giants will bring in their own experts to argue that the rise in teen suicide and depression is linked to everything except their apps: economic anxiety, climate change, or the breakdown of the nuclear family. They will try to bury the plaintiffs' "ABC" (Addicting the Brains of Children) under a mountain of statistical noise.

The jury will eventually have to decide: is the modern mental health crisis a tragic coincidence, or is it the inevitable result of a business model that treats human attention as a resource to be mined, regardless of the ecological damage to the mind?
