The American courtroom has become the new dumping ground for parental failure.

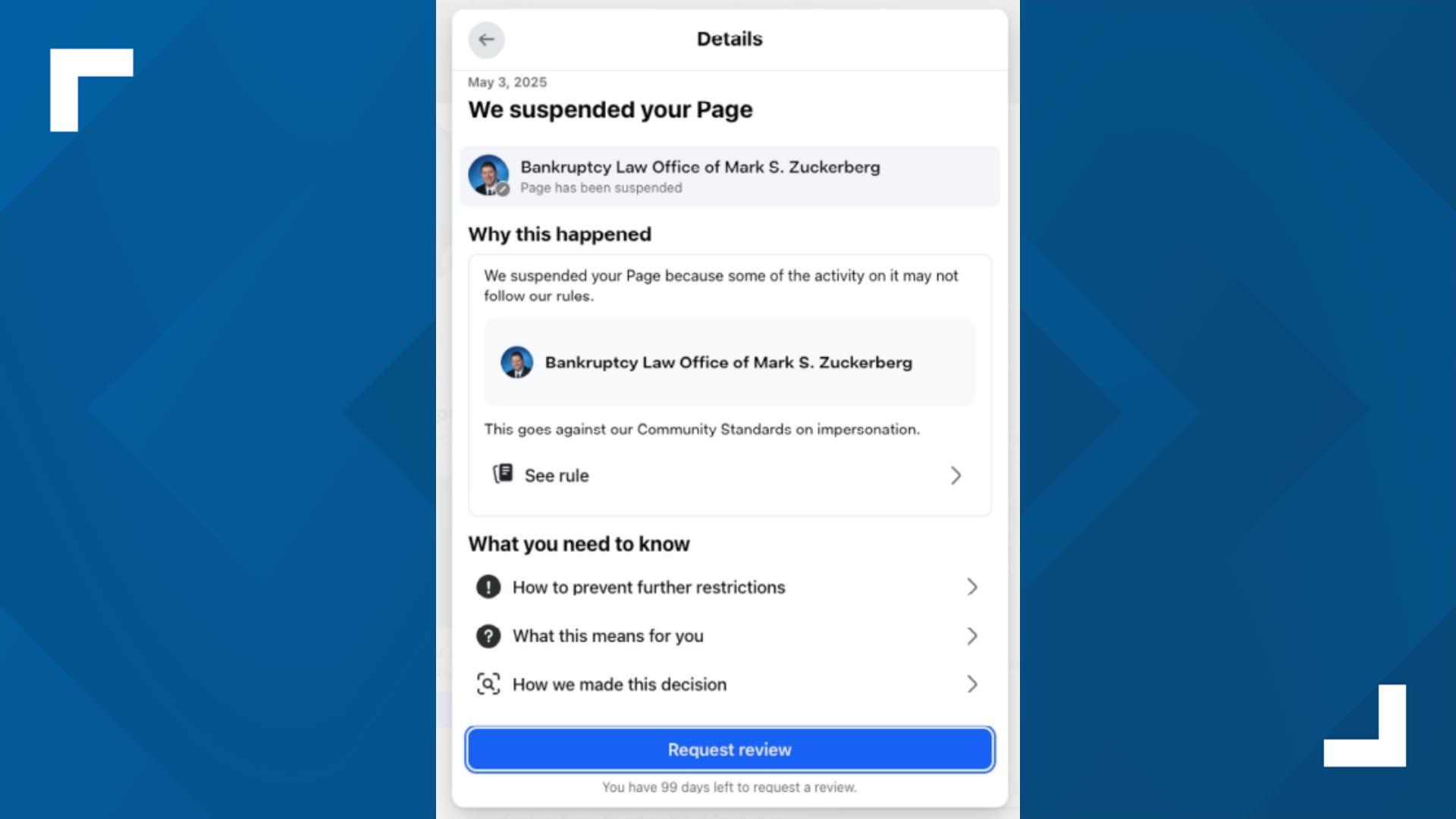

Last week in Los Angeles, Mark Zuckerberg sat on a witness stand, enduring a predictable grilling about whether Instagram is a "defective product" designed to addict children. The plaintiff, a 20-year-old identified as K.G.M., claims she was "hooked" by age nine, leading to years of depression and body dysmorphia. The legal theater is high-stakes, but the premise is intellectually bankrupt. We are witnessing a massive, coordinated attempt to litigate away the consequences of passive parenting.

The Myth of the Passive Victim

The "addiction-machine" theory rests on a convenient lie: that children are helpless biological automatons with zero agency, and parents are mere spectators to a digital kidnapping.

In reality, the evidence presented at the Los Angeles trial tells a different story. Meta’s defense pointed out that K.G.M. faced significant mental health challenges long before she ever opened an app. This isn't just a legal "gotcha"; it points to a pre-existing vulnerability gap.

Social media doesn't create mental illness out of thin air. It accelerates what is already there. If a child is already struggling with social phobia or family instability, a smartphone becomes a tool for avoidant coping.

"The teen would often turn to her phone to make it look as if she were ‘doing something rather than sitting and being perceived as having no friends’," testified the plaintiff's own former therapist.

That isn't a "defective algorithm." That is a lonely child using a tool to hide her loneliness. Suing the tool-maker for the child's isolation is like suing a mirror manufacturer because you don't like the reflection.

The Dopamine Deception

Lawyers love to use the "slot machine" analogy. They claim infinite scroll and "likes" are engineered to hijack the brain's reward system.

Here is the nuance the "lazy consensus" misses: Everything worth doing is dopamine-driven.

- Reading a book you love? Dopamine.

- Winning a soccer game? Dopamine.

- Getting a "Good Job" sticker in second grade? Dopamine.

We have pathologized digital engagement because it’s easier than competing with it. If a child prefers the "unpredictable rewards" of a TikTok feed to the predictable boredom of their living room, that isn't a failure of engineering. It’s a failure of the environment.

We are asking a jury to define "problematic use" when the scientific community can't even agree on whether "social media addiction" is a clinical diagnosis. As Instagram head Adam Mosseri argued during his testimony, high usage is often just "watching TV for longer than you feel good about." It’s a vice, not a virus.

The Age Verification Farce

Zuckerberg was hammered on the stand for failing to keep under-13s off his platforms. He rightly noted, "I don't see why this is so complicated."

It’s only complicated because parents are the ones helping their kids lie. A national study published in Academic Pediatrics found that nearly 64% of children under 13 have a social media account, and 90% of those kids aren't hiding it from their parents.

Let’s be brutally honest:

- Parents buy the $1,000 iPhone.

- Parents provide the high-speed Wi-Fi.

- Parents ignore the "13+" age rating because the app is a convenient digital pacifier.

- Parents sue when the pacifier works too well.

I’ve seen this play out in Silicon Valley and across the country. We want the convenience of a connected child (tracking their GPS, calling them after practice) without the harder work of monitoring their digital intake.

The Section 230 Smoke Screen

The legal strategy in L.A. is to get around Section 230 of the Communications Decency Act, which shields platforms from liability for content their users post. The plaintiffs claim the problem is the app's design, not its content. It’s a clever semantic trick: features like "Like" counts and beauty filters get recast as product defects.

If a beauty filter is a defect, then so is a push-up bra or a tube of concealer. These are tools of self-expression and, yes, social signaling. The "harm" occurs when a user has a fragile sense of self.

Imagine a scenario where we sue Ford because a teenager used a Mustang to drag race. We don't call the car "defective" because it’s fast; we blame the driver (and the person who gave them the keys) for the misuse of power.

Why the Lawsuits Will Fail Even if They Win

Even if a jury hits Meta with a billion-dollar verdict, it won't solve the "crisis."

- Regulation creates a vacuum. If you ban Instagram for teens, they move to decentralized, unmoderated platforms where the risks are far higher.

- Litigation lags. By the time this trial concludes, the "addictive" features of 2024 will have been replaced by AI-driven companions that are even more engaging.

- Responsibility is non-transferable. You cannot litigate a CEO into being a father or a mother.

The "Kids Off Social Media Act" and similar legislative pushes are just more attempts to deputize Big Tech as a national nanny. They push platforms toward age verification that erodes digital privacy for everyone, all because some parents can't say "no" to a ten-year-old.

The Hard Truth

The solution isn't a courtroom victory. It’s a culture shift.

Stop treating unsealed internal documents as proof of a conspiracy. The "conspiracy" is that these companies want people to use their products. That is the definition of a successful business.

If you want your child to be "un-addicted," give them something better to do. If you can't compete with an algorithm, that’s an indictment of your household, not Zuckerberg’s code.

The L.A. trial isn't about protecting children. It’s about absolving adults.