The headlines are screaming about a "landmark" victory. Judges are nodding. Parents are exhaling a collective sigh of relief because they think they finally found a scapegoat for the dinner-table silence. The recent U.S. court rulings against Meta and Google, alleging their algorithms are engineered to hook minors, are being treated like the Big Tobacco moment of the 2020s.

It is a comforting narrative. It is also a total fantasy.

Assigning legal liability to a software company for the "addiction" of a teenager is the ultimate exercise in passing the buck. We are litigating the symptoms of a fundamental shift in human biology and social architecture, hoping a billion-dollar fine will somehow restore a pre-iPhone innocence that no longer exists. If you think a courtroom win will change the neurological reality of your kid’s brain, you are playing the wrong game.

The Algorithmic Boogeyman is Just Math

The central argument in these lawsuits is that platforms like TikTok and Instagram use "predatory" algorithms. Lawyers talk about these systems as if they are sentient monsters hiding in the wires, specifically designed to ruin a fourteen-year-old's dopamine receptors.

Let’s get real. An algorithm is a feedback loop. It is a mirror. It shows the user more of what they interact with. If a teenager spends four hours watching "sad girl" edits or extreme fitness reels, the algorithm isn’t "forcing" them to stay; it is reacting to the data they provide.
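
To make the "mirror" concrete, here is a minimal sketch of an engagement feedback loop. It is a toy, not any platform's actual ranking system: the topic names, the `user_likes` set, and the rule that a user engages with every liked topic they are shown are all assumptions made for illustration.

```python
from collections import Counter

def rank_feed(topics, engagement):
    # Most-engaged topics first. Python's sort is stable, so ties keep
    # their original order.
    return sorted(topics, key=lambda t: engagement[t], reverse=True)

def run_sessions(topics, user_likes, sessions=3, feed_size=4):
    engagement = Counter()
    feed = list(topics)
    for _ in range(sessions):
        # Hypothetical user: sees the top of the feed and engages only
        # with the topics they already like.
        for topic in feed[:feed_size]:
            if topic in user_likes:
                engagement[topic] += 1
        # The ranker reacts to that data and nothing else.
        feed = rank_feed(topics, engagement)
    return feed, engagement

topics = ["news", "cooking", "sad edits", "fitness", "comedy"]
final_feed, engagement = run_sessions(topics, user_likes={"sad edits", "fitness"})
print(final_feed)
```

In this sketch the ranker never sees anything but the user's own interaction counts, which is the sense in which the feed reflects behavior rather than dictating it.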

We’ve reached a point where we want to criminalize relevance.

I’ve spent fifteen years watching product teams optimize engagement. They aren’t sitting in a boardroom twirling mustaches. They are trying to solve the problem of choice paralysis. In a world of infinite content, a "dumb" feed is a dead product. By litigating the algorithm, we are essentially saying that software shouldn't be good at its job. If the software is effective at keeping you interested, it’s "addictive." If it’s bad at it, it’s a failure. There is no middle ground in the current legal framework that doesn't involve neutering the very utility of the internet.

The Tobacco Comparison is Lazy and Dangerous

The most frequent talking point is that "social media is the new cigarettes." This is a spectacular misunderstanding of both biochemistry and economics.

Cigarettes are a physical delivery system for a chemical compound, nicotine, which has no functional utility other than its own consumption. Social media is a communication tool. Comparing an Instagram feed to a Marlboro Red is like comparing a telephone to a syringe.

- Cigarettes provide a physiological hook with a well-documented, dose-dependent health outcome (lung damage).

- Social media provides a psychological hook with a variable outcome that depends on the content consumed and the predisposition of the user.

When we use the tobacco analogy, we ignore the agency of the individual and the responsibility of the environment. You don't "use" a cigarette to build a business, learn a language, or maintain a long-distance relationship. You do all of those things on the platforms currently being sued. By treating social media as a pure toxin, the courts are ignoring the nuance of digital literacy. We are trying to ban the ocean because some people don't know how to swim.

The Parent Trap

Here is the truth no one wants to hear: The "addiction" isn't a tech problem. It’s a parenting and infrastructure problem.

We have spent twenty years dismantling the "third places" where teenagers used to congregate. Malls are dying. Parks are empty. Curfews are tighter. We have effectively imprisoned an entire generation in their bedrooms and then acted shocked when they turned to the only window they have left—the screen.

I have seen parents hand a toddler an iPad to keep them quiet at a restaurant and then, ten years later, join a class-action lawsuit because that same child can’t put the phone down. You cannot outsource the moral and digital development of a human being to a Silicon Valley corporation and then sue them when the result isn't a well-adjusted Harvard grad.

The court cases focus on "design features" like infinite scroll and push notifications.

- Infinite Scroll: A UI choice to reduce friction.

- Push Notifications: A tool for real-time updates.

Are these distracting? Yes. Are they "defective products"? No more than a 24-hour diner or a library with too many books is a "defective" establishment. The responsibility for setting boundaries has shifted from the home to the courthouse, and that is a recipe for a permanent state of victimhood.

The Hidden Cost of "Winning"

Let’s imagine a scenario where these lawsuits actually succeed in their wildest dreams. The courts force Meta and Google to "de-addict" their platforms. What does that look like?

- Identity Verification: To protect minors, every user will have to upload a government ID. Say goodbye to any semblance of online anonymity.

- The "Boring" Feed: Platforms will be forced to use chronological feeds that don't learn from user behavior. You’ll see every boring update from your high school acquaintance and none of the content that actually inspires you.

- The End of Free: If engagement drops, ad revenue drops. If ad revenue drops, these platforms become subscription-based. We are headed toward a "Digital Divide 2.0," where only the wealthy can afford "safe" or premium social spaces, while the rest of the world is left with the digital equivalent of a toxic wasteland.

The litigation assumes that we can return to a 1995 version of the internet if we just sue the right people. We can’t. The bell cannot be un-rung.

The Dopamine Myth

Everyone loves to throw around the word "dopamine" like they’re a neuroscientist. "The apps are hacking our dopamine!"

Everything hacks your dopamine. Winning a board game hacks your dopamine. Getting a good grade hacks your dopamine. Seeing a friend you like hacks your dopamine. The problem isn't the presence of dopamine; it's the lack of friction.

The courts are trying to legislate friction. They want to force companies to build "speed bumps" into their software. But humans are incredibly good at bypassing friction when they want a reward. If you make Instagram boring, kids will move to a platform that hasn't been sued yet. If you ban that one, they’ll go to a decentralized app where there is no CEO to subpoena and no corporate office to picket.

We are chasing ghosts while the real issue—a lack of purpose and community in the physical world—remains unaddressed.

The Actionable Truth

If you want to protect the next generation, stop looking at the courthouse and start looking at the router.

- Audit the Environment: If a kid is on a phone for eight hours, it’s usually because the physical world around them offers nothing that competes for their attention.

- Teach the Mechanics: Instead of telling kids "social media is bad," show them how the auction system works. Show them how their attention is the product being sold to advertisers. Knowledge is a better shield than a court order.

- Accept the Trade-off: We traded the boredom of the 20th century for the overstimulation of the 21st. You don't get the benefits of global connectivity without the risks of digital noise.
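
For the "Teach the Mechanics" point, a toy ad auction is one way to show a teenager what "attention is the product" means. The sketch below assumes a sealed-bid second-price auction, where the highest bidder wins one impression and pays the runner-up's bid. That format is an assumption chosen for simplicity, real ad systems differ in their details, and the advertiser names and bid amounts are invented.

```python
def run_second_price_auction(bids):
    """bids maps advertiser name -> bid in dollars for one ad impression.

    Returns (winner, price), where price is the second-highest bid.
    """
    if len(bids) < 2:
        raise ValueError("need at least two bidders")
    # Highest bid first.
    ranked = sorted(bids.items(), key=lambda item: item[1], reverse=True)
    winner = ranked[0][0]
    price = ranked[1][1]  # the winner pays the runner-up's bid
    return winner, price

# Hypothetical advertisers competing for one view by one user.
bids = {"sneaker_brand": 0.012, "game_studio": 0.009, "snack_company": 0.004}
winner, price = run_second_price_auction(bids)
print(f"{winner} wins the impression and pays ${price:.3f}")
```

Each scroll, in this picture, triggers a small sale like this one, with the viewer's attention as the item on the block.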

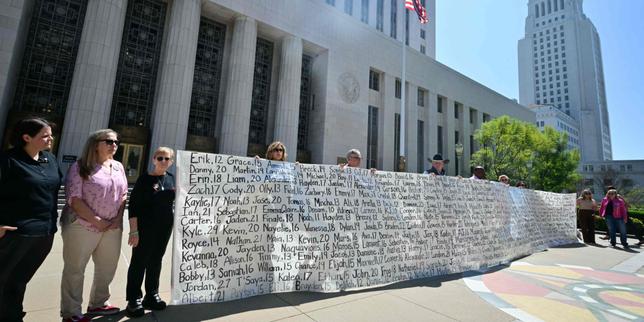

These lawsuits are a massive redistribution of wealth from tech companies to law firms. They will produce thousands of billable hours, hundreds of headlines, and exactly zero changes in how a teenager’s brain processes a notification.

The gavel won't save your kids. You have to do that yourself.