The frontier of artificial intelligence is no longer protected by math or encryption. It is being bled dry by a process known as model distillation, a sophisticated form of intellectual property theft that turns a billion-dollar investment into a free blueprint for competitors. Recently, Anthropic joined OpenAI in sounding the alarm on massive, systematic campaigns by Chinese firms to siphon the "intelligence" out of Western models. This isn't just a data leak. It is a fundamental shift in the global balance of AI power, where the giants of Silicon Valley build the engines and their rivals in Beijing strip them for parts.

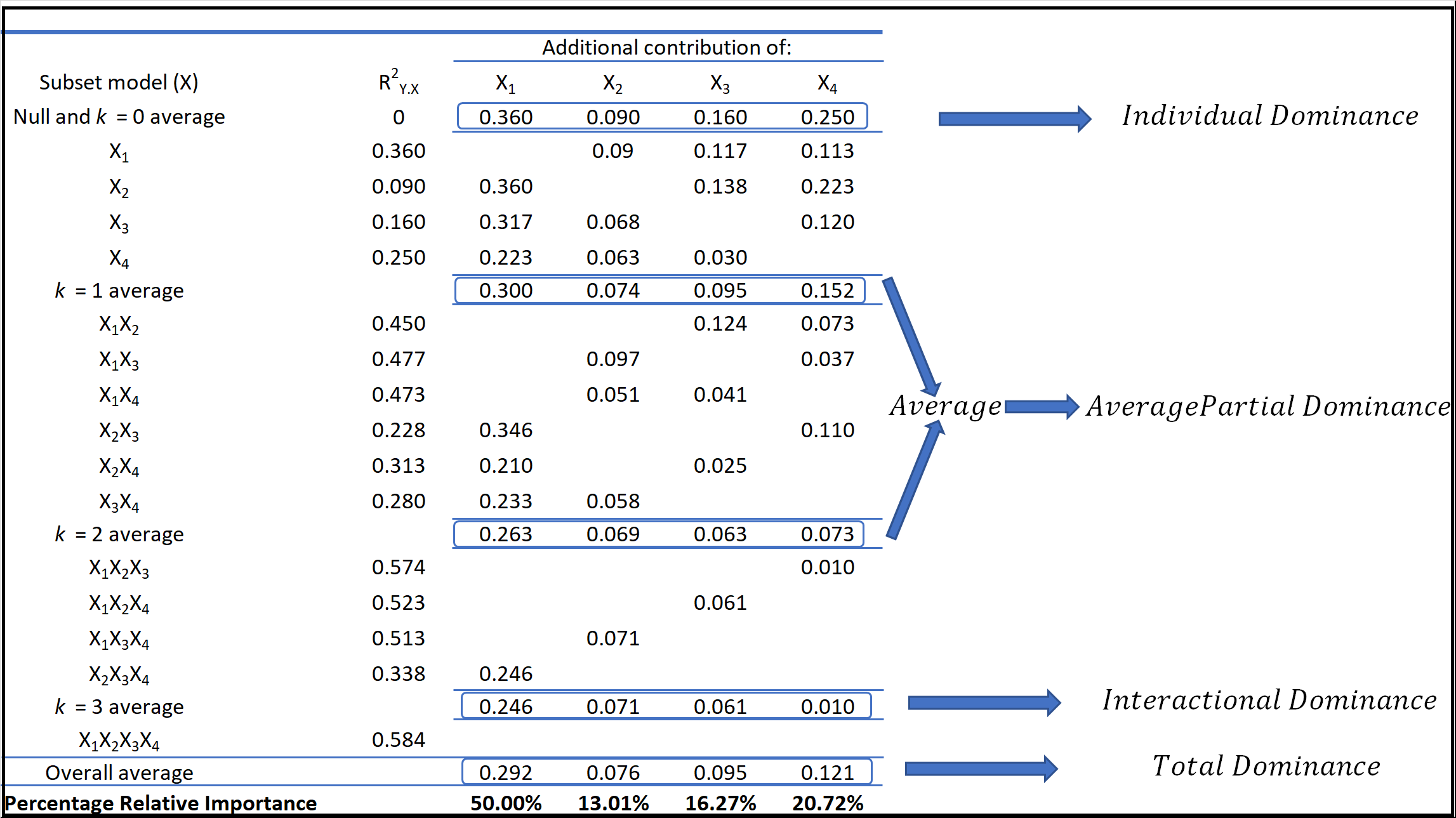

The premise is straightforward but devastating. Instead of spending $100 million training a model from scratch, a developer has a "student" model learn from how a "teacher" model, such as Claude or GPT-4, responds to millions of complex queries. Trained on these outputs, the smaller model learns to mimic the reasoning patterns and logic of the leader. It never touches the original model's weights or code; it copies the behavior those weights produce.
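As a rough illustration of the mechanics, the sketch below pairs each prompt with a teacher's response and writes the pairs out as training records for a student. Everything here is hypothetical: `fake_teacher` is a stand-in function, not a real API client, and the `prompt`/`completion` field names are an assumed record layout rather than any vendor's format.

```python
import json
from typing import Callable, Iterable


def build_distillation_records(
    prompts: Iterable[str], teacher: Callable[[str], str]
) -> list[dict]:
    """Pair each prompt with the teacher's answer as one training record."""
    records = []
    for prompt in prompts:
        # The student never sees the teacher's weights, only its text output.
        records.append({"prompt": prompt, "completion": teacher(prompt)})
    return records


def fake_teacher(prompt: str) -> str:
    """Hypothetical stand-in for a call to a large hosted model."""
    return f"Step-by-step answer to: {prompt}"


records = build_distillation_records(
    ["What is 2 + 2?", "Why is the sky blue?"], fake_teacher
)

# One JSON object per line is a common layout for fine-tuning data.
jsonl = "\n".join(json.dumps(record) for record in records)
```

The student is then fine-tuned on these records with ordinary supervised training, which is why the approach scales with the number of queries rather than with access to the teacher's internals.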

The Infrastructure of the Siphon

For years, the industry treated distillation as a legitimate research tool. It was a way to make models smaller and faster so they could run on a smartphone instead of a massive server farm. But the scale has shifted. We are now seeing "industrial-scale" distillation, where Chinese tech giants and well-funded startups use vast arrays of automated accounts to pepper Western APIs with questions designed to map their internal logic.

These aren't random users asking for poems. They are sophisticated scripts generating synthetic datasets. This process allows a firm like ByteDance or Alibaba to bypass the grueling trial-and-error phase of AI development. They are essentially using Claude’s own brain to teach their homegrown models how to think.

The economics are lopsided. A company like Anthropic pours years of research and massive amounts of capital into "alignment," the process of making an AI safe, helpful, and honest. A competitor using distillation picks up much of that polished behavior and those logical frameworks for the price of an API subscription. They are harvesting the fruits of Western R&D for pennies on the dollar.

Detection is a Game of Shadows

Identifying a distillation campaign is notoriously difficult. How do you distinguish between a power user and a bot designed to steal your logic?

Anthropic and OpenAI have begun deploying "canary" tokens and specialized monitoring systems. These systems look for patterns in prompts that are too structured, too repetitive, or too focused on probing the edges of the model’s knowledge. If a single entity asks 50,000 variations of a logic puzzle, they aren't looking for an answer. They are looking for the underlying formula.
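One way to picture the "too structured, too repetitive" signal is a per-account repetition score. The sketch below is an assumption for illustration, not a description of any company's actual monitoring: it collapses numbers in each prompt into a placeholder so that variations of one puzzle share a template, then flags accounts whose traffic is dominated by a single template. The function names, the `min_prompts` floor, and the `threshold` value are all hypothetical.

```python
import re
from collections import Counter, defaultdict


def template_of(prompt: str) -> str:
    """Collapse digits and whitespace so puzzle variations share one template."""
    text = re.sub(r"\d+", "<num>", prompt.lower())
    return re.sub(r"\s+", " ", text).strip()


def repetition_scores(log):
    """Map each account to the share of its prompts in its most common template."""
    templates = defaultdict(Counter)
    for account, prompt in log:
        templates[account][template_of(prompt)] += 1
    return {
        account: max(counts.values()) / sum(counts.values())
        for account, counts in templates.items()
    }


def flag_accounts(log, min_prompts=4, threshold=0.8):
    """Return accounts with enough traffic and a high repetition score."""
    log = list(log)
    totals = Counter(account for account, _ in log)
    scores = repetition_scores(log)
    return sorted(
        account
        for account, score in scores.items()
        if totals[account] >= min_prompts and score >= threshold
    )


# Toy log: one scripted account varying a puzzle, one ordinary user.
log = [
    ("bot-1", f"If {n} cats catch {n} mice in {n} minutes, how long for {m} cats?")
    for n, m in [(3, 100), (4, 50), (5, 20), (6, 10), (7, 5)]
] + [
    ("user-7", "Write a poem about autumn"),
    ("user-7", "Summarize this email for me"),
    ("user-7", "What is the capital of Peru?"),
    ("user-7", "Fix the bug in my loop"),
]

flagged = flag_accounts(log)
```

A heuristic this simple would be easy to evade by rewording prompts or spreading them across accounts, so it serves only to make the signal concrete.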

The problem is that the "thieves" are getting smarter. They rotate IP addresses. They use "low and slow" attacks that pace requests at human-like rates. They mix their probing questions with mundane tasks to hide their tracks. It’s a digital shell game where the stakes are the future of the most valuable technology on earth.

The Compute Gap Paradox

There is a bitter irony in this struggle. The United States has spent the last three years tightening export controls on high-end Nvidia chips, hoping to starve Chinese AI development of the hardware it needs to compete. However, distillation works as a force multiplier for inferior hardware.

If you have a massive "teacher" model to guide you, you don't need a massive cluster of H100 GPUs to find the right path. You just need enough power to follow the map someone else already drew. By distilling the world’s best models, Chinese firms are effectively neutralizing the impact of US hardware sanctions. They are making their limited compute go much further by skipping much of the costly trial and error of training from scratch.

The Myth of the Moat

For a long time, the prevailing wisdom in Silicon Valley was that "data is the new oil" and that having the largest dataset created an unassailable moat. That theory is dead.

In the age of distillation, the moat is drying up. If your model is accessible via an API, it is vulnerable. This creates a massive strategic dilemma for companies like OpenAI, Google, and Anthropic. To make money, they must sell access to their models. But every time they sell access, they provide the very tools their competitors need to replace them.

We are seeing a move toward "closed-loop" ecosystems. Some companies are considering limiting API access for certain regions or implementing aggressive rate limits on accounts that exhibit suspicious "probing" behavior. But these are stopgap measures. The nature of LLMs means that their intelligence is inherently exportable through their words.
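To make the rate-limiting idea concrete, here is a minimal sketch of a token bucket whose refill rate shrinks as an account's suspicion score rises. This is an assumed design for illustration only: the `AccountBucket` class, its defaults, and the idea of a `suspicion` score between 0 and 1 are hypothetical, and the clock is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass


@dataclass
class AccountBucket:
    """Token bucket for one account; each request costs one token."""

    capacity: float = 10.0       # maximum burst size
    refill_per_sec: float = 1.0  # refill rate for a trusted account
    tokens: float = 10.0         # tokens currently available
    last_seen: float = 0.0       # timestamp of the previous request

    def allow(self, now: float, suspicion: float = 0.0) -> bool:
        """Refill based on elapsed time, then try to spend one token.

        suspicion is clamped to [0, 1]; at 1 the bucket stops refilling.
        """
        suspicion = min(max(suspicion, 0.0), 1.0)
        elapsed = max(now - self.last_seen, 0.0)
        rate = self.refill_per_sec * (1.0 - suspicion)
        self.tokens = min(self.capacity, self.tokens + elapsed * rate)
        self.last_seen = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


bucket = AccountBucket(capacity=2.0, refill_per_sec=1.0, tokens=2.0)
burst = [bucket.allow(now=0.0) for _ in range(3)]    # third request exceeds the burst
throttled = bucket.allow(now=1.0, suspicion=1.0)     # fully suspicious: no refill
recovered = bucket.allow(now=2.0, suspicion=0.0)     # trust restored: one token back
```

Scaling the refill rate rather than blocking outright degrades a suspected account gradually, which limits the damage when the suspicion score is wrong about a legitimate heavy user.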

A New Era of Corporate Espionage

This isn't the traditional spy-movie theft where someone walks out with a thumb drive. This is "black box" reverse engineering at a scale never before seen in industrial history.

Consider a hypothetical example. A firm wants to build a medical AI but lacks the billions of clinical data points required. Instead, they prompt a leading Western AI with millions of medical cases, asking it to diagnose and explain its reasoning. The firm then takes those millions of high-quality explanations and uses them to train their own local model. The local model eventually becomes nearly as good as the original, despite the firm never having a single original medical record.

This "synthetic data" loop is the primary engine of the current AI boom in China. It allows them to leapfrog the data-collection hurdles that took Western companies a decade to clear.

The Policy Failure

The policy response has been sluggish. Regulators are still arguing about "AI safety" and "bias" while the core intellectual property of the most important companies in the West is being drained through an open tap.

There is currently no international legal framework that adequately covers model distillation. Is it "fair use" to train a model on the outputs of another? Most legal experts say the current laws are ill-equipped to handle this. If I read a book and learn how to write like the author, I haven't committed a crime. If a machine does it a billion times in a weekend, the act is arguably the same in kind, but the economic impact is a collapse of the author’s market value.

The tech giants are now lobbying for stricter "know your customer" (KYC) rules for cloud providers. They want to treat high-end compute and API access like a banking transaction, requiring verified identities to prevent shell companies from running distillation scripts.

The Internal Cost of Defense

Defending against distillation also degrades the product. To catch the "thieves," companies have to monitor user queries with an intensity that raises serious privacy concerns.

Furthermore, if a model is "watermarked" or its outputs are subtly altered to prevent distillation, it may become less useful for legitimate users. We are entering a phase where the "user experience" is being sacrificed at the altar of "IP protection." This creates an opening for open-source models, whose developers have no reason to guard against distillation, to gain ground simply because they don't carry the "defensive overhead" of the proprietary giants.

The Open Source Wildcard

While Anthropic and OpenAI are hunkering down, the open-source movement—led by Meta’s Llama series—is complicating the narrative. Mark Zuckerberg’s strategy of releasing high-powered weights for free makes the distillation efforts of Chinese firms almost redundant. Why steal from a closed API when you can download a world-class model for free?

This puts Anthropic in a pincer movement. On one side, they are being "distilled" by state-backed competitors in the East. On the other, they are being commoditized by open-source projects in the West. The space for a high-priced, proprietary model is shrinking every day.

The Intelligence Arms Race

The battle over distillation is the first real skirmish in a much longer war over the "sovereignty of intelligence." If a nation can simply clone the cognitive capabilities of its rivals, then the advantage of being first is fleeting.

We are moving toward a world where the only thing that cannot be distilled is the "live" connection to real-world data and real-time compute. This is why we see a rush toward "Agentic AI"—systems that don't just talk, but actually do work in the real world. A model's "reasoning" can be stolen, but its integrated access to a specific company’s private database or its ability to execute code in a secure environment is much harder to copy.

The strategy for survival is no longer about having the "smartest" model. It is about having the most "useful" ecosystem. The model is becoming a commodity; the integration is the product.

Companies that fail to realize this will find themselves at the end of a very long, very expensive road, holding a "top tier" model that has already been cloned, refined, and redistributed by a dozen competitors who didn't pay a cent for the research. The era of the "AI secret" is over; the era of the "AI utility" has begun.

You cannot lock a mind inside a server if you are also charging people to talk to it. Every word the model speaks is a piece of its soul being sold to the highest—or sneakiest—bidder. The only way to win is to move faster than the shadow chasing you.