The pearl-clutching over AI-generated political ads has reached a fever pitch. If you believe the mainstream narrative, we are one deepfake away from the total collapse of the democratic process. Pundits scream about "misinformation" while tech giants scramble to slap warning labels on every pixel that looks too smooth. They want you to believe that artificial intelligence is a uniquely dangerous threat to the sanctity of our elections.

They are wrong.

In fact, the obsession with "detecting" AI ads is a massive distraction from the real problem: the voters themselves and the legacy media’s desperate need to remain relevant. AI isn't the poison; it’s the mirror. It is forcing us to confront the fact that political messaging has been a hall of mirrors for decades. The only difference now is the efficiency of the reflections.

The Myth of the Informed Voter

The loudest argument against AI in politics is that it "deceives" the public. This premise assumes there was a Golden Age of Political Truth that we are suddenly departing.

Let’s get real.

Political campaigning has always been about the strategic manipulation of reality. We’ve had airbrushed photos since the dawn of the camera. We’ve had "creative" video editing since the 1950s. We’ve had "push polling" and whisper campaigns that would make a generative model blush. To suggest that a 30-second spot featuring a synthetic voice is a revolutionary threat to truth is to ignore a century of propaganda.

The "informed voter" is largely a statistical ghost. Most people vote based on identity, tribalism, and gut feeling. Civics surveys, such as the Annenberg Public Policy Center’s annual Constitution Day survey, have repeatedly found that a substantial share of American adults cannot name all three branches of government, let alone parse the nuances of a policy white paper. AI ads don't "break" the voter’s ability to discern truth; they simply accelerate the delivery of what the voter already wants to believe.

The Efficiency Paradox

Critics hate AI because it lowers the barrier to entry. They call it "weaponized content production." I call it the democratization of the ground game.

In the old world (meaning four years ago), running a high-quality ad campaign required a large budget, a production crew, and weeks of lead time. That handed a built-in advantage to incumbents and well-funded establishment candidates. AI wipes much of that out. Now, a grassroots challenger with a laptop and a clear message can produce professional-grade content for the cost of a monthly subscription.

We should be celebrating this. Instead, we see the "establishment" (both in politics and media) trying to regulate these tools into oblivion. Why? Because when anyone can create high-impact media, the gatekeepers lose their power. The outcry isn't about protecting the truth; it’s about protecting the moat.

Regulation Is Security Theater

Watch any congressional hearing on AI and you’ll see the same thing: octogenarians asking tech CEOs how the "internets" work. These are the people we expect to regulate synthetic media?

The current push for "watermarking" and "mandatory disclosure" is a joke.

- Bad actors don't follow rules. Do you think a foreign bot farm or a rogue PAC cares about a digital watermark?

- Watermarks are easily stripped. Most of what platforms like Meta or Google are trying to implement relies on "metadata" attached to the file, and any developer with a modicum of skill can remove it. Often a simple re-encode or a screenshot is enough.

- Disclosure is ignored. Most voters don't look for the small print. If a video shows a candidate saying something that confirms a voter’s existing bias, that voter will share it regardless of a "Generated by AI" tag.
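The fragility of metadata-style labels can be illustrated with a minimal sketch. It builds a tiny PNG that carries a disclosure label in a standard `tEXt` chunk, then makes a copy that keeps only the image data. The `Disclosure` keyword and the function names are assumptions made for illustration; they are not any platform's actual labeling scheme, and the sketch says nothing about watermarks embedded in the pixels themselves.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: length, type, data, CRC-32 over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def build_labeled_png() -> bytes:
    """Create a 1x1 grayscale PNG carrying a hypothetical disclosure label."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)  # 1x1, 8-bit grayscale
    pixels = zlib.compress(b"\x00\x80")  # filter byte 0, then one gray pixel
    label = b"Disclosure\x00Generated by AI"  # tEXt layout: keyword, NUL, text
    return (
        PNG_SIGNATURE
        + make_chunk(b"IHDR", ihdr)
        + make_chunk(b"tEXt", label)
        + make_chunk(b"IDAT", pixels)
        + make_chunk(b"IEND", b"")
    )


def iter_chunks(png: bytes):
    """Yield (type, data) for every chunk after the 8-byte signature."""
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        chunk_type = png[pos + 4:pos + 8]
        data = png[pos + 8:pos + 8 + length]
        yield chunk_type, data
        pos += 12 + length  # 4 length + 4 type + data + 4 CRC


def text_labels(png: bytes) -> list:
    """Return the raw payload of every tEXt chunk in the file."""
    return [data for chunk_type, data in iter_chunks(png) if chunk_type == b"tEXt"]


def copy_without_text(png: bytes) -> bytes:
    """Copy the image, keeping every chunk except tEXt metadata."""
    kept = [make_chunk(t, d) for t, d in iter_chunks(png) if t != b"tEXt"]
    return PNG_SIGNATURE + b"".join(kept)


labeled = build_labeled_png()
copied = copy_without_text(labeled)
print(text_labels(labeled))  # the label is present in the original
print(text_labels(copied))   # the copy has identical pixels and no label
```

The pixels in the copy are byte-for-byte the same, which is the point: a label that lives beside the image rather than in it survives only as long as every tool in the chain chooses to preserve it.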

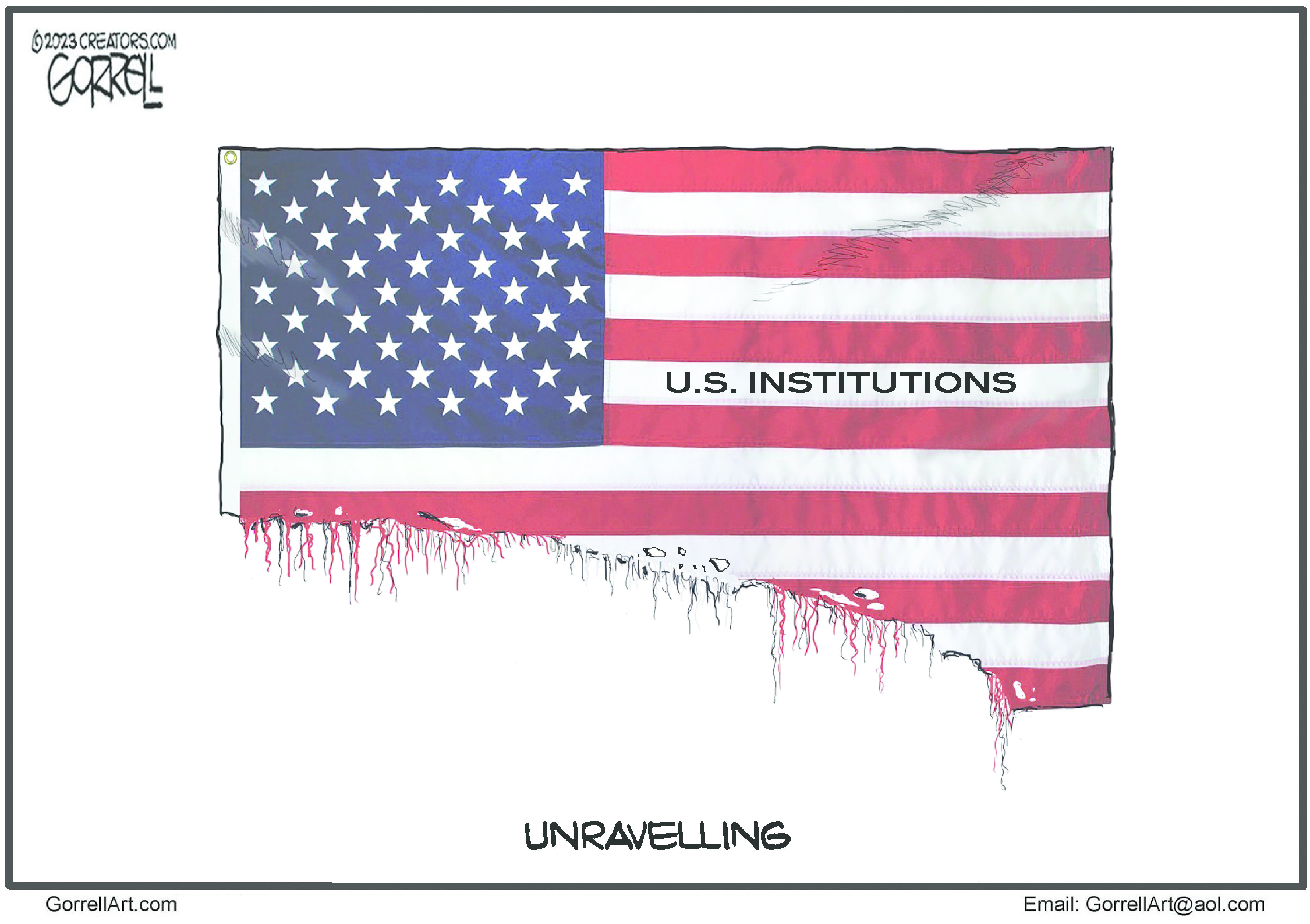

By focusing on the tool (AI), we are ignoring the intent. A lie told via a high-end CGI render is no more dangerous than a lie told via a grainy cell phone video or a printed flyer. Our obsession with the tech is a form of displacement activity. It’s easier to blame an algorithm than it is to address the systemic decay of civic education and critical thinking.

The Deepfake Boogeyman

"But what about the deepfakes?" the critics cry. "What if a video drops the night before the election showing a candidate taking a bribe?"

Imagine this scenario: A video surfaces of a candidate. It looks real. The candidate denies it. The media spends 24 hours debating its authenticity.

In a world where everyone knows AI can create anything, that debate cuts both ways, and the "liar’s dividend" kicks in. The concept, described by legal scholars Bobby Chesney and Danielle Citron, holds that as the public becomes aware of deepfakes, actual villains can simply claim that real incriminating footage is fake.

The threat isn't that we will believe everything we see; it's that we will believe nothing. And while that sounds cynical, a healthy dose of skepticism is exactly what the modern voter needs. If AI forces the average person to stop trusting every "leaked" video they see on X (formerly Twitter), it has inadvertently performed a service to the republic.

Stop Trying to Fix the Ads, Fix the Audience

If we actually cared about the integrity of our elections, we’d stop trying to ban math—which is all AI is—and start focusing on the human side of the equation.

We need to stop asking "How do we stop AI ads?" and start asking "Why are voters so easily manipulated by them?"

The answer is uncomfortable. We have an education system that treats "media literacy" as an elective rather than a survival skill. We have a social media infrastructure designed to reward outrage over accuracy. And we have a political class that benefits from polarization.

AI is just a mirror. If you don't like the reflection, don't break the glass. Change what is standing in front of it.

The Coming Era of Personalized Propaganda

The real disruption isn't the "fake video." It's the hyper-personalization of messaging.

$P = f(D, A)$

In this simplified model, the Power of Persuasion ($P$) is a function of your Data ($D$) and the Algorithm ($A$).

In the near future, you won't see the same ad as your neighbor. AI will analyze your specific grievances, your spending habits, and your fears to generate a custom-tailored video designed specifically to trigger you. This isn't idle speculation; it's the logical conclusion of the micro-targeting we’ve seen since at least 2016.

The "lazy consensus" says we should ban this. Good luck. You can't ban the intersection of data science and psychology. Instead of futile bans, we should be demanding total transparency in data harvesting. If a campaign knows you're afraid of losing your job to automation, they’ll send you an AI-generated ad about job security. The problem isn't the AI; it's the fact that they knew what you were afraid of in the first place.
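As a toy illustration of $P = f(D, A)$, the sketch below treats the data $D$ as a map from concerns to intensities and the algorithm $A$ as a rule that targets the strongest concern it has a message for. Every name here (`VoterProfile`, `TEMPLATES`, `select_message`) and the scoring rule itself are assumptions made for illustration; no real campaign system is being described.

```python
from dataclasses import dataclass


@dataclass
class VoterProfile:
    """Hypothetical harvested data D: concern name -> intensity in [0, 1]."""
    name: str
    concerns: dict[str, float]


# Hypothetical message templates, keyed by the concern they target.
TEMPLATES = {
    "automation": "{name}, we will protect your job from automation.",
    "housing": "{name}, we will bring rents back down.",
    "crime": "{name}, we will make your street safe again.",
}
GENERIC = "{name}, vote for a better future."


def select_message(profile: VoterProfile) -> tuple[str, float]:
    """Toy algorithm A: target the strongest concern that has a template.

    Returns the message and a stand-in persuasion score P, which here is
    simply the intensity of the targeted concern (0.0 when nothing is known).
    """
    known = {c: w for c, w in profile.concerns.items() if c in TEMPLATES}
    if not known:
        return GENERIC.format(name=profile.name), 0.0
    top = max(known, key=known.get)
    return TEMPLATES[top].format(name=profile.name), known[top]


# With data, the message is aimed at the voter's strongest known fear.
print(select_message(VoterProfile("Alex", {"automation": 0.9, "housing": 0.4})))
# Without data, the same algorithm can only fall back to a generic appeal.
print(select_message(VoterProfile("Sam", {})))
```

In this sketch the algorithm is trivial and identical for both voters. The only thing that changes the output is how much the campaign already knows, which is why the pressure point is the harvesting of $D$ rather than the sophistication of $A$.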

Why the Tech Giants are Hypocrites

Google and Meta are talking a big game about "election integrity." Don't buy it for a second. These companies are in the business of selling attention. They love AI ads because AI-generated content is more engaging, which means more time on site, which means more ad revenue.

Their "restrictions" are a PR move to avoid antitrust litigation and further regulation. They are putting up a "Notice: Wet Floor" sign while they continue to pump the water. If they truly cared about misinformation, they would kill the engagement-based algorithms that prioritize viral lies over boring truths. But they won't, because that would kill their stock price.

The Brutal Reality

AI is going to saturate the next election cycle. There will be thousands of fake voices, synthetic crowds, and manufactured scandals. And you know what? Most of it will fail.

The "scare" relies on the idea that AI is some magical hypnosis tool. It’s not. It’s just content. And we are already drowning in content. We are becoming immune to the "shock" of the digital image. The more AI-generated garbage that enters the stream, the more people will retreat to trusted, human-vetted sources—or, more likely, they will just tune out the noise entirely.

The real winners won't be the ones with the best AI. They will be the ones who can prove they are human in a world of bots. Authenticity is the new gold standard, and AI is the furnace that is burning away the dross.

Stop worrying about whether an ad is "real." Start worrying about whether the person behind it has a soul.

Log off. Talk to your neighbors. Read the actual text of a bill. If you're relying on a 15-second video on your phone to tell you how to vote—whether it was made by a human or a machine—you’ve already lost.
